Introduction

Higher education has long framed leadership preparation as an important educational outcome. Within the past three decades, however, scholars and practitioners have paid increasingly focused attention to understanding the particular mechanisms, environments, and experiences by which students develop their leadership attributes. Nevertheless, leadership education is a relatively new discipline, experiencing the growing pains of establishing a professional identity, understanding the nature of its interdisciplinarity, and untangling the various contexts in which leadership learning takes place (Guthrie & Jenkins, 2018). These challenges are not unlike, nor unrelated to, the “perennial problems in the ‘language of leadership’” (Komives, Dugan, Owen, Slack, & Wagner, 2011), which call into question how leaders and processes of leadership should be defined, assessed, and practiced. Consequently, researchers face challenges in determining what constitutes effective leadership practice, how leaders develop such competencies, and how these competencies might be measured (Andenoro et al., 2013).

Amidst this muddiness, common understandings of effective leadership are beginning to emerge. Drawing on Rost’s (1993) definition of post-industrial leadership, much contemporary leadership education research discusses leadership as a process—which is a distinct differentiation from a leader as a person (Guthrie & Jenkins, 2018; Komives et al., 2011). This process involves active leaders and followers who work ethically and collaboratively to enact positive change. One camp of scholars might assert that the attainment of the desired goal thus measures effective leadership; others suggest that it is merely the authentic engagement of leaders and followers throughout the process that constitutes effectiveness (Rost, 1993). Regardless of goal attainment, effective leadership processes result in the positive development of all group members. Therefore, given that the preparation of future leaders is an important goal in higher education, measuring the effectiveness of teaching such processes should be a primary goal in educational research.

Fundamental Elements of Leadership Capacity

Leadership educators believe that everyone has the potential to increase their capacity for effective leadership. Leadership capacity is commonly thought of as a broad combination of knowledge, skills, and attitudes which enable individuals to engage in the practice of leadership (Dugan, 2017; Guthrie & Jenkins, 2018).

Within a leadership learning framework, leadership knowledge comprises theoretical, technical, and humanistic elements (Guthrie & Jenkins, 2018). Technical elements may consist of the understanding of a specific job function, or general knowledge of the principles of a discipline or the workings of an organization. Conversely, humanistic knowledge consists of understanding oneself and others. Theoretical knowledge is acquired through exposure to formal and informal leadership theories, both within and outside of classroom settings. Theoretical knowledge may not play a strong role in determining capacity for effective leadership, however, as most individuals who engage in processes of leadership have never been exposed to formal theories of leadership (Dugan, 2017).

Whereas cognitive and intellectual understanding comprises leadership knowledge, leadership skill is the ability to put this knowledge into action. Similar to the domains of leadership knowledge, leadership skill also occurs in the technical, human, and conceptual spheres (Katz, 1955). A person who possesses technical skill can perform task-related functions. Technical skill is also commonly thought of as the ability to work with things. On the other hand, human skill is the ability to work with people. Effective leaders are sensitive to the needs of team members and know how to work collaboratively with others in pursuit of a common goal. Conceptual skill is considered the highest-order skill. It consists of the ability to work with concepts and ideas. Leaders with strong conceptual abilities possess the skills to understand the big picture, create a compelling vision, think strategically, and consider solutions to complex problems.

Capacity for effective leadership also considers the attitudinal or internal qualities related to leaders and the leadership process. Such internal dimensions may include: the ways one identifies with the labels, language, and identities of leadership (Arminio et al., 2000; Komives, Owen, Longerbeam, Mainella, & Osteen, 2005), the extent to which one possesses confidence in their ability to enact leadership-related behaviors (Hannah, Avolio, Luthans, & Harms, 2008), and the extent to which one feels motivated to engage in positions and/or processes of leadership (Chan & Drasgow, 2001).

An Integrated Conceptual Model of Contemporary Leadership Capacity

Given the pertinent leadership dimensions mentioned above, and the need for contemporary leaders to be able to work collaboratively to solve increasingly complex problems (Rost, 1993; Soria, Snyder, & Reinhard, 2015), a conceptual model of leadership capacity which envisions effective leaders as those who are ready (confident), willing (motivated), and able (skilled) to lead has emerged in recent years (Keating, Rosch, & Burgoon, 2014).

Leadership Readiness. Drawing on Hannah and Avolio’s (2010) research pertaining to leader developmental readiness, Bandura’s (1997) work regarding learning efficacy, and Murphy’s (1992) operationalization of leadership self-efficacy, readiness within the Ready-Willing-Able model of leadership capacity (Keating et al., 2014) refers to the degree to which one feels ready to lead, or feels that one’s leadership-oriented behaviors will lead to success. Research has shown that race and gender tend to influence self-efficacy beliefs for college students (Kezar & Moriarty, 2000; Kodama & Dugan, 2013; McCormick, Tanguma, & López-Forment, 2002). Such findings carry important implications: students with higher leadership self-efficacy attempt to take on leadership roles at significantly greater rates than students with low leadership self-efficacy (McCormick et al., 2002), and sociocultural conversations with peers and engagement in prior leadership experiences are strong positive predictors of heightened leadership self-efficacy (Kodama & Dugan, 2013; McCormick et al., 2002).

Motivation to Lead. Cognitive motivation refers to the internal process that predicts the direction, intensity, and persistence of behavior (Chan & Drasgow, 2001). Motivation to lead is operationalized as the driving force involved in the decision to assume leadership roles and responsibilities and the intensity with which one exerts effort at leading and persistence as a leader (Chan & Drasgow, 2001). Quantitative studies of student leadership capacity rarely include motivation to lead as a key variable, but qualitative and mixed-methods approaches have indicated that desires to make change in the community, to interact with peers who have similar interests, and to adhere to the advice of mentors can play a role in motivating students to assume leadership roles (Garcia, Huerta, Ramirez, & Patrón, 2017; Harper & Quaye, 2007; Renn & Ozaki, 2010).

Leadership Skill. Leadership motivation and self-efficacy play significant roles in propelling students toward leadership-oriented situations, but leadership capacity also considers the skills needed once they are in a position of influence, whether formal or informal. In particular, transformational leadership skill (Burns, 1978) encompasses the ability to change the nature of organizations, communities, and societies, to serve as a role model, and to inspire others toward action. The ability of leaders to set standards, provide discipline when standards are not met, and provide rewards based on performance, typically characterized as transactional leadership skills (Bass, 1985), is also an important component of the leadership process. However, transformational and transactional skills are not often explicitly measured in student development research. Studies examining similar kinds of skill development, such as collaboration, common purpose, and integrative leadership, point to a strong influence of civic engagement/community service, sociocultural conversations with peers, peer mentoring relationships, and formal leadership training on transformational types of leadership skills in particular (Dugan & Komives, 2010; Soria et al., 2015).

The Ready-Willing-Able (Keating et al., 2014) conceptual framing of leadership capacity has been used to inform recent studies investigating students’ interrelated leader self-efficacy beliefs, motivations for engaging in leadership-oriented behaviors and perceived abilities to lead from transactional and transformational standpoints. This line of research has yielded important insights about the impact of formal leadership program participation on lasting leadership capacity gains for students (Rosch & Collins, 2019), the influence of racially diverse learning environments on motivation to lead for White students and students of color (Collins & Rosch, 2018; Collins, Suarez, Beatty, & Rosch, 2017), and the power of involvement in formal high school organizations in developing motivated, confident, and skilled student organization members in college (Rosch & Nelson, 2019).

Measuring Student Capacity for Leadership: Current Challenges

Measuring each of these outcomes simultaneously, however, has proven difficult for numerous practical and psychometric reasons, which we highlight in this section. The two most significant difficulties are the applicability of any instrument to the broad diversity of areas in which leadership education happens, and the cost/benefit balance between survey length and the number of latent constructs that can be measured within an instrument. In response to these issues, we sought to create an instrument supported by rigorous psychometric analysis that would measure broad-based leadership capacity and would also be concise enough for broad and general use.

The Issue of Context. One issue is that current research tends to focus more on leadership outcomes that align with specific theoretical frameworks or programmatic contexts than on broad-based capacity, making research findings too narrow for cross-context applicability. As Dugan (2017) explains,

Different formal theories emphasize different sets of knowledge, skills, and behaviors that may or may not be transferable among one another. In other words, an individual or group may have high leadership capacity for one formal theory, but little capacity to engage effectively based on the assumptions of another theory (p. 14).

For example, the Full-Range Leadership Development Model, initially proposed by Avolio and Bass (1991) and measured by the Multi-Factor Leadership Questionnaire (MLQ; Bass & Avolio, 2004), describes “transformational leadership” using specific behavioral markers that, while almost inarguably positive, are not universally accepted as a bounded definition of what “transformational” leaders do. Similarly, the Social Change Model of Leadership Development (Higher Education Research Institute, 1996), one of the most popular models of leadership in higher education (Owen, 2012), makes specific claims about the capacity required for “socially responsible leadership” that are not unambiguously accepted across public sectors. These cross-context differences make it difficult to create a broadly accepted measure. A leadership capacity measure should be broad enough that most educators would find it useful for assessing effectiveness, yet specific enough that it provides these educators with actionable information about where to direct their efforts in supporting student development.

The Issue of Length. A handful of models have aimed to address the challenge of context-applicability by incorporating several theoretical models into one inventory. Unfortunately, this creates a new problem—namely, scale length. The widely used Multi-Institutional Study of Leadership (MSL), for example, draws on Astin’s (1993) Inputs-Environments-Outputs college impact model and incorporates more than 400 psychometric items and scales related to six existing theoretical models of leadership (https://www.leadershipstudy.net/about#about-msl). As another example, the Emotionally Intelligent Leadership (EIL) Inventory consists of 183 items representing an integrative theoretical model which considers 21 capacities of cognitive processes, personality traits, behaviors, and other competencies (Allen, Shankman, & Miguel, 2012; Miguel & Allen, 2016).

Not surprisingly, longer questionnaires tend to result in lower overall response rates, higher item-nonresponse rates, shorter response-time per item, and less varied responses, especially for items positioned later in the survey (Galesic & Bosnjak, 2009). In addition, participants’ interest in providing well-considered responses wanes as the survey progresses. Leadership education relies heavily on student self-report data. Thus, constructing survey length to optimize response rate and quality is of crucial importance.

Research Purpose

Given these challenges, we sought to create an instrument of leadership capacity that (a) has been psychometrically validated for use with college student populations; (b) includes measures of leadership capacity that are known to be essential to effective leadership practice (leader self-efficacy, motivation, and skill); (c) is broad enough for use across contexts; and (d) is concise. Our research consisted of a two-study design. The goal of the first study was to utilize exploratory factor analysis (EFA) to create an initial survey instrument; we focused the second study on validating the initial instrument using confirmatory factor analysis (CFA) on responses collected from a separate sample of participants.

Study One – Instrument Creation

Population and Sample. Our initial sample consisted of university students who participated in a six-day intensive leadership development experience coordinated by LeaderShape, Inc., known as the “LeaderShape Institute,” in the academic year beginning in the fall of 2014 and ending in the spring of 2015. That year, 85 postsecondary institutions in the United States hosted an Institute on their campus. An open call to these institutions yielded 20 campuses which volunteered to participate in the study. These 20 schools were diverse in terms of size, institutional control (public or private), selectivity, and location. We also collected data from four Institutes hosted directly by LeaderShape staff, which included students from across the United States. Our data collection efforts yielded a sample of 1,333 participants who had completed each item in the survey, which represented over 95% of the students who participated across the 24 Institute sessions. This sample was more than large enough for the statistical power required for a rigorous EFA (Ellis, 2010). Approximately 58% of the sample identified as a woman, while 38% identified as a man, and 4% did not report their gender identity. With regard to racial identity, 48% identified as White, 17% as African-American, 12% as Asian-American, 6% as Latinx, and 5% as multi-racial, while 11% did not report their racial identity or could not be coded within the above categories. Approximately 27% of the sample identified as first-year undergraduate students, 27% as sophomores, 29% as juniors, 8% as seniors, 2% as graduate students, and 9% did not report their class standing. Approximately 7% of the sample identified as a non-United States citizen.

We felt that this sample of postsecondary students was ideal for this study: a broad and diverse sample of students who had volunteered to participate in a leadership development program – therefore indicating an interest in the topic of leadership – but who had not yet received the benefit of participating in the LeaderShape Institute for which they were registered.

Instrumentation and Data Collection. We collected data after students had registered to participate in the LeaderShape Institute, but before the beginning of the program. We included three separate measures of leadership capacity that have long been in use, measuring, respectively, leadership skill, leader self-efficacy, and motivation to lead. To measure leadership skill, we utilized the Leader Behavior Scale (LBS; Podsakoff, MacKenzie, Moorman, & Fetter, 1990), a 28-item survey that includes two subscales – one focused on transformational leadership skill (LBS_Form) and the other on transactional leadership skill (LBS_Act). The LBS has been in use for almost thirty years in both educational and business settings, with Cronbach alphas ranging from .71 to .89 (Yukl, 2010). In the current sample, we measured Cronbach alphas ranging from .71 to .87.

To measure leader self-efficacy, we utilized the Self-efficacy for Leadership scale (SEL; Murphy, 1992), an 8-item instrument measuring the degree of success a potential leader expects when enacting general leadership-oriented behaviors. The SEL has also been in active use for several years across many contexts, including business and education. Past research indicates Cronbach alpha reliabilities above .80 (e.g., Hoyt, Murphy, Halverson, & Watson, 2003; Murphy & Ensher, 1999), while the current sample yielded an internal reliability of .71.

We measured motivation to lead using Chan and Drasgow’s Motivation to Lead scale (MTL; Chan & Drasgow, 2001). The MTL consists of 27 items equally distributed across three subscales: Affective-Identity (MTL_AI), Non-Calculative (MTL_NC), and Social-Normative (MTL_SN) constructs. The MTL has been in use for almost 20 years and has similarly been implemented in research studies spanning business and educational pursuits. Past research indicated acceptable Cronbach alpha levels, while our current sample yielded reliability statistics ranging from .77 to .82.
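
As an illustration of how internal reliability statistics such as the Cronbach alphas reported above are typically computed, the following minimal sketch applies the standard formula, alpha = (k / (k - 1)) × (1 - sum of item variances / variance of total scores), to a small response matrix. The function name `cronbach_alpha` and the demonstration data are hypothetical and are not drawn from the study.

```python
from statistics import pvariance


def cronbach_alpha(responses):
    """Cronbach's alpha for one scale.

    responses: list of rows, one per participant; each row holds that
    participant's scores on the k items of the scale.
    """
    k = len(responses[0])                    # number of items in the scale
    items = list(zip(*responses))            # transpose: one tuple per item
    item_var_sum = sum(pvariance(col) for col in items)
    total_var = pvariance([sum(row) for row in responses])
    return (k / (k - 1)) * (1 - item_var_sum / total_var)


# Hypothetical 5-point Likert responses (4 participants x 3 items).
# These values are invented for illustration only.
demo = [
    [4, 5, 4],
    [2, 3, 2],
    [5, 5, 4],
    [3, 3, 3],
]
alpha = cronbach_alpha(demo)
print(f"alpha = {alpha:.2f}")
```

Because the same variance estimator is applied to the items and to the total scores, the choice between population and sample variance does not change the resulting alpha.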

A benefit of using well-established scales for our purposes is that prior research had established convergent, discriminant, criterion, and external validity for each of them. Within each of the above scales, response sets consisted of a 5-point Likert scale ranging from “strongly disagree” to “strongly agree.” To decrease the probability of response fatigue resulting in non-random patterns within participant responses, we placed survey items in randomized order so that items designed to measure the same leadership construct were not grouped.

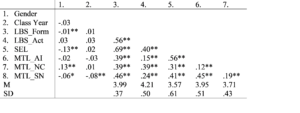

Data Analysis and Results. Before conducting more complex analyses, we first examined the intercorrelations among the relevant variables to determine the degree to which they were related and whether an EFA was warranted. The results of this analysis are displayed in Table 1. Our findings suggest that each of the leadership capacity sub-scales was significantly intercorrelated, indicating that exploratory factor analysis should be employed to eliminate items that load too strongly across multiple scales, which would have the additional benefit of reducing the number of overall items (Conway & Huffcutt, 2003).

Table 1

Variable Intercorrelations

*p < .05; **p < .01

Note. LBS_Form = Transformational Leadership Skill (LBS; Podsakoff, MacKenzie, Moorman, & Fetter, 1990), LBS_Act = Transactional Leadership Skill (LBS; Podsakoff, MacKenzie, Moorman, & Fetter, 1990), SEL = Self-Efficacy for Leadership Scale (SEL; Murphy, 1992), MTL_AI = Affective Identity Motivation to Lead (MTL; Chan & Drasgow, 2001), MTL_NC = Non-Calculative Motivation to Lead (MTL; Chan & Drasgow, 2001), MTL_SN = Social Normative Motivation to Lead (MTL; Chan & Drasgow, 2001).

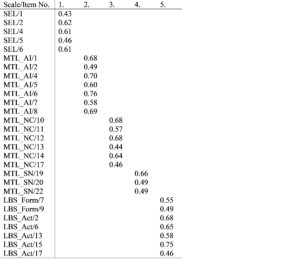

Next, we conducted a principal components analysis with oblique rotation to maximize the degree of separation between items (Conway & Huffcutt, 2003). Given the large amount of correlation between sub-scales, we chose not to constrain the number of factors to six (i.e., the presumed number of discrete sub-scales across the three included instruments). Our analysis resulted in 13 factors emerging with eigenvalues above 1.0, which collectively explained 53% of the variance in participant responses. For parsimony and clarity, we eliminated items that did not possess a loading weight above 0.40, had a difference of less than 0.3 between the first and second factor loading, or that loaded at 0.40 or above onto more than a single factor, in line with good practice in social science research (Matsunaga, 2010). This decision left 28 individual items across the three discrete leadership scales that each loaded onto one of five latent factors that collectively explained 37% of the overall variance. It is important to note that within these factor loading restrictions, no meaningful difference emerged between LBS items originally designed to load to a transformational leadership behaviors factor and those designed to load to a transactional leadership behaviors factor. Combining the transformational and transactional leadership scales into one omnibus “Leadership Skill” scale left three constructs (leadership skill, leader self-efficacy, and motivation to lead) consisting of five sub-scales measured by the 28 items. These items became the pilot Ready-Willing-Able leadership capacity measure that we employed in Study Two.
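
The three item-retention rules described above can be expressed as a simple filter over a matrix of rotated loadings. The sketch below is illustrative only: the function name `retain_item`, the item labels, and the loading values are hypothetical, and comparing absolute loadings is an assumption rather than a detail reported for this analysis.

```python
def retain_item(loadings, min_primary=0.40, min_gap=0.30, max_cross=0.40):
    """Return True if an item survives the three retention rules.

    loadings: the item's loadings on every extracted factor.
    Assumption: rules are applied to absolute loading values.
    """
    ranked = sorted((abs(x) for x in loadings), reverse=True)
    primary, secondary = ranked[0], ranked[1]
    if primary <= min_primary:           # rule 1: primary loading must exceed .40
        return False
    if primary - secondary < min_gap:    # rule 2: gap of at least .30 required
        return False
    if secondary >= max_cross:           # rule 3: no second loading at .40 or above
        return False
    return True


# Hypothetical rotated loadings on five factors (not study data).
demo_loadings = {
    "item_a": [0.62, 0.10, 0.05, 0.08, 0.02],   # clean primary loading
    "item_b": [0.35, 0.12, 0.04, 0.03, 0.01],   # primary loading too low
    "item_c": [0.55, 0.45, 0.06, 0.02, 0.01],   # cross-loads on two factors
    "item_d": [0.50, 0.30, 0.05, 0.02, 0.01],   # gap smaller than .30
    "item_e": [-0.71, 0.05, 0.03, 0.02, 0.01],  # strong negative loading
}
kept = [name for name, row in demo_loadings.items() if retain_item(row)]
print(kept)
```

Note that under these thresholds the third rule is already implied by the first two whenever the primary loading is below 0.70; it is kept separate here to mirror the rules as stated.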

Table 2

Exploratory Factor Loadings

Study Two – Instrument Validation

Population and Sample. The population from which we drew the sample for Study Two consisted of students involved in officially registered student organizations at a large, selective, research-extensive public university in the Midwestern region of the United States. In the fall semester of 2016, we sent an open invitation to a diverse group of these organizations in partnership with the administrative office that oversees such groups. We identified them through their strong relationship with that office and therefore expected this group to yield an acceptable response rate. While not a random sample, these organizations were highly diverse in terms of their mission and goals, membership, size, longevity on campus, and organizational structure. Participating groups included those focused on performance and dance, sports, fraternities and sororities, and professions-focused organizations (e.g., Accountancy club). Given that our research was designed to create a rigorously tested comprehensive measure of the effects of postsecondary educational experiences on students’ leadership development, we felt that this diverse sample was ideal for our research purposes. Moreover, the population from which the sample was collected was not in any way associated with the initial population.

A total of 38 student organizations participated, which included 744 total students who completed the entire 28-item survey, more than enough for the statistical power required for our analysis (Ellis, 2010). These participants made up the sample for this part of our study. Of this sample, 66% identified as a woman, 32% as a man, and 2% chose not to identify their gender identity. Approximately 49% identified as White, 33% as Asian-American, 6% as Latinx, and 3% as African-American, and 6% as multi-racial, while 9% could not be categorized within one of those groupings or did not report their racial identity. Approximately 22% identified as freshmen, 27% as sophomores, 22% as juniors, 21% as seniors, and 7% as graduate students, while 1% chose not to identify a class year.

Instrumentation and Data Collection. In addition to the demographic items reported above, our survey instrument consisted of the 28 individual items within the pilot Ready-Willing-Able measure derived from the results of Study One. We sent initial invitations to participate in our research study to the presiding executive officers of those organizations identified by our administrative partners, requesting to attend an organizational meeting that Fall to distribute surveys that included the pilot instrument. Student members who missed the meeting were sent an email invitation to complete an identical version of the survey online.

Data Analysis and Results. In Study One, we reduced the initial 63 items from the three original measures of leadership capacity to 28 items for our pilot instrument using exploratory factor analysis methods. Study Two was designed to utilize confirmatory factor analysis using a different sample of students to test the fit of the hypothesized factor structure with the observed covariance structure of the data collected from our sample of students involved in formal organizations (Brown, 2015). We first tested the 28-item measure, employing maximum likelihood estimation, fitting the five-factor model suggested in our prior analysis. We examined the individual loading statistics for each item as well as overall goodness of fit statistics. Our results indicated that seven individual items possessed low loading weights on their relevant latent constructs, relative to the remaining items. Moreover, the model did not achieve a statistically appropriate representation of the expected underlying constructs, given modern conventions (Hu & Bentler, 1999): χ²(340) = 1824.64, CMIN/DF = 5.38, CFI = .83, RMSEA = .08, PCFI = .75. Hu and Bentler (1999) suggest a minimum Comparative Fit Index (CFI) of .95 and a Root-Mean-Square Error of Approximation (RMSEA) of less than .08. To investigate whether the five-factor structure was inappropriate, we repeated the analysis after collapsing the three motivation to lead subscales into a larger general motivation to lead latent factor. The resulting analysis produced even lower loading weights and goodness of fit markers (χ²(347) = 3232.90, CMIN/DF = 9.32, CFI = .67, RMSEA = .11, PCFI = .62). A subsequent analysis in which all items were collapsed into a single omnibus “leadership capacity” latent factor resulted in even worse fit statistics: χ²(350) = 4281.96, CMIN/DF = 12.23, CFI = .56, RMSEA = .12, PCFI = .52.
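
Two of the fit statistics reported above can be recovered from the chi-square value, its degrees of freedom, and the sample size. The sketch below assumes the commonly used point estimate RMSEA = sqrt(max(χ² - df, 0) / (df × (N - 1))) and takes N = 744 from the Study Two sample description; the function names are hypothetical, and values produced by SEM software may differ slightly because of rounding or a different sample-size convention.

```python
import math


def cmin_df(chi2, df):
    """Relative chi-square: the model chi-square divided by its degrees of freedom."""
    return chi2 / df


def rmsea(chi2, df, n):
    """RMSEA point estimate (assumed formula, using N - 1 in the denominator)."""
    return math.sqrt(max(chi2 - df, 0.0) / (df * (n - 1)))


N = 744  # complete responses reported for Study Two

# (chi-square, degrees of freedom) for the three 28-item models in the text.
models = {
    "five factors": (1824.64, 340),
    "three factors (motivation collapsed)": (3232.90, 347),
    "one omnibus factor": (4281.96, 350),
}

for label, (chi2, df) in models.items():
    print(f"{label}: CMIN/DF = {cmin_df(chi2, df):.2f}, "
          f"RMSEA = {rmsea(chi2, df, N):.3f}")
```

Lower values on both statistics indicate better fit, so the ordering of the three models can be read directly from this output.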

Given these results, we eliminated the seven items that possessed non-significant factor loadings with respect to their respective latent constructs, resulting in a 21-item instrument. We then conducted a CFA that included the initial five sub-scales. Our results indicated that each item possessed a loading weight onto its respective latent construct of .84 or higher, while the model overall represented a good fit of the expected underlying theoretical shape of the data in all but one of the standard areas of diagnosis (χ²(180) = 748.46, CMIN/DF = 4.16, CFI = .92, RMSEA = .06, PCFI = .76). To check whether collapsing the motivation-to-lead subscales into one scale was a more accurate way to represent our data, we conducted a subsequent analysis substituting a three-factor structure, which resulted in inappropriate goodness of fit statistics (χ²(186) = 2079.10, CMIN/DF = 11.18, CFI = .72, RMSEA = .12, PCFI = .64). We found similar results when substituting a one-factor omnibus structure (χ²(189) = 3115.57, CMIN/DF = 16.48, CFI = .57, RMSEA = .14, PCFI = .51). These results suggest that the five-factor structure, which differentiates motivation to lead among three subscales and separates motivation, self-efficacy, and skill factors, represented an appropriate means by which to measure student leadership capacity.
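
The comparison among the three competing 21-item structures can be summarized by screening each model's reported indices against conventional cutoffs. In the sketch below, the CFI and RMSEA values are copied from the text; the class and function names are hypothetical, and the cutoffs (CFI of at least .95, RMSEA below .08) are assumptions reflecting common convention rather than a rule specific to this study.

```python
from dataclasses import dataclass


@dataclass
class Fit:
    label: str
    cfi: float
    rmsea: float


def screen(fit, min_cfi=0.95, max_rmsea=0.08):
    """Flag whether each index meets its (assumed) conventional cutoff."""
    return {"cfi_ok": fit.cfi >= min_cfi, "rmsea_ok": fit.rmsea < max_rmsea}


# Reported indices for the 21-item models.
fits = [
    Fit("five factors", 0.92, 0.06),
    Fit("three factors", 0.72, 0.12),
    Fit("one factor", 0.57, 0.14),
]

for fit in fits:
    print(fit.label, screen(fit))

# Rank by higher CFI first, then lower RMSEA.
best = max(fits, key=lambda f: (f.cfi, -f.rmsea))
print("best-fitting structure:", best.label)
```

Under these assumed cutoffs the five-factor model passes on RMSEA but falls short on CFI, which is consistent with the description of good fit in all but one diagnostic area.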

Discussion

This research initiative was designed to create and test a new psychometric survey instrument that would measure individual leader capacity and was optimized for use in studying the development of leaders. Our complementary goals were to design an instrument that broadly measures multiple leadership dimensions – leadership skill, leader self-efficacy, and motivation to lead – and that was also short enough that future research participants would not be dissuaded from completing it due to its length and the high motivational load imposed upon them. We began with three separate and popular instruments measuring each of the three leadership dimensions – the Leader Behavior Scale (Podsakoff, MacKenzie, Moorman, & Fetter, 1990) measuring leadership skill, the Self-efficacy for Leadership Scale (Murphy, 1992) measuring leader self-efficacy, and the Motivation to Lead scale (Chan & Drasgow, 2001) measuring motivation to lead. In an initial study, we employed Exploratory Factor Analysis using responses from a national sample of college students to reduce what was originally 63 items across the three instruments into 28 total items, each independently loading onto only one of the dimensions. In a subsequent study, we employed Confirmatory Factor Analysis with maximum likelihood estimation, using responses from another set of participants at a single institution, to eliminate seven more items and increase the explanatory power of each separate sub-scale. Our efforts resulted in a 21-item instrument, which we titled the “Ready, Willing, and Able Leader (RWAL) Scale,” that continued to measure the original leadership dimensions of the initial three scales and also possessed appropriate goodness-of-fit indices.

Interestingly, our results indicated that it would be inappropriate to continue to measure transformational and transactional leadership skill separately, a concerning finding that has persisted in past areas of leadership impact research that have not employed the specialized “Full-Range” construct of transformational leadership (Krüger, Rowold, Borgmann, Staufenbiel, & Heinitz, 2011) developed by Bass and Avolio (1994). Our results suggest that university students who complete surveys of their leadership behaviors may not be able to consciously differentiate between these different leader behavior paradigms with respect to their own leadership style, a finding that has been supported, at least in part, by recent past research using university student participants (Rosch, 2018).

Our results, while initial, are encouraging for leadership educators interested in rigorous yet concise quantitative instrumentation for assessing the overlapping capacities to lead, which include the skill one possesses in engaging in leader behaviors, the self-efficacy one possesses to engage in these behaviors, and the motivation one possesses to so engage. The survey was designed using populations of students that were diverse in terms of gender and racial identity, academic class year, program of study, co-curricular interests, and in the case of our initial study, academic background and geographic location.

Implications for Future Research. To be clear, however, several follow-up studies are necessary to further delineate the rigor and usefulness of the Ready, Willing, and Able Leader Scale. Perhaps primarily, the survey needs to be examined in more depth across different educational contexts and examined in relation to other measures of leadership capacity. While our initial study that resulted in creating the shortened instrument was conducted using a diverse population, we were still limited in using a dataset that only included students who had volunteered to participate in a LeaderShape Institute. In addition, while our second study included a broad spectrum of students in terms of their interests, all of them hailed from a single institution. These limitations should not preclude use of the scale; indeed, many survey instruments have been built from less diverse samples. They do indicate, however, that the need to further test the scale has not subsided.

Researchers should also consider collecting data from non-college students, such as industry professionals or high school students. We mentioned above the difficulty of translating instruments across contexts, especially in the leadership education environment; these subsequent studies would shed more light on exactly how broad-based the RWAL Scale is. Studies that focus on specific social identity factors are also warranted. For example, do differences in gender or racial identity influence the ways study participants complete the new scale? Differences such as these would require further modification of the survey to make the Scale as accessible, fair, and rigorous as possible for future implementation in leadership education initiatives.

Implications for Leadership Educators. Our research was primarily designed to better aid leadership educators in assessing and then evaluating the impact of their programs on those who participate in them. Presuming future research continues to support our results suggesting the validity of the instrument, educators can now assess leadership skill, leader self-efficacy, and motivation to lead in 21 items that would take participants less than five minutes to complete. Educators could then easily implement pre-post test designs, retrospective pre-tests, and longitudinal surveying without asking for much time or commitment on the part of participants. Such longitudinal use would give researchers additional opportunities to employ intra-individual multilevel modeling research designs, a growing area within leadership impact research.

While the scale does not include other areas of data, such as participant demographics and background or other attributes that research shows are related to leadership capacity, it does provide evaluators the ability to include additional items without unnecessarily extending the time it would take participants to complete a requested survey. Contemporary studies are beginning to suggest that not every leadership program benefits all participants equally (Dugan et al., 2011; Rosch, Ogolsky, & Stephens, 2017). As Owen (2012) implies, increased rigor needs to be applied in assessing and evaluating the impact of these programs.

One particular area in which the RWAL Scale might be especially beneficial is in the assessment of diverse groups of students. Several studies (e.g., Kodama & Dugan, 2013; Rosch, Collier, & Thompson, 2015) indicate that students who differ across several aspects of social identity possess varying levels of self-reported skill, self-efficacy, and motivation to engage in leadership behaviors before being exposed to formal or informal training opportunities. In this context, the RWAL Scale can potentially be employed by university assessment officers to better understand the experiences and incoming capacities of enrolled students.

Summary

We conducted two connected research studies in an effort to create a broad-based and concise quantitative survey instrument, the Ready, Willing, and Able Leader (RWAL) Scale, that would measure three different areas popular in the assessment of individual leader capacity: leadership skill, leader self-efficacy, and motivation to lead. The first study used Exploratory Factor Analysis on three pre-existing scales and resulted in a 28-item measure where each item loaded onto only one sub-scale. The second study employed Confirmatory Factor Analysis on a different population of participants and resulted in a shortened 21-item scale, where each item loaded onto only one sub-scale and the overall scale displayed appropriate goodness-of-fit statistics.

References

Allen, S. J., Shankman, M. L., & Miguel, R. F. (2012). Emotionally Intelligent Leadership: An integrative, process-oriented theory of student leadership. Journal of Leadership Education, 11, 177–203.

Andenoro, A. C., Allen, S. J., Haber-Curran, P., Jenkins, D. M., Sowcik, M., Dugan, J. P., & Osteen, L. (2013). National leadership education research agenda 2013-2018: Providing strategic direction for the field of leadership education.

Arminio, J. L., Carter, S., Jones, S. E., Kruger, K., Lucas, N., Washington, J., … Scott, A. (2000). Leadership experiences of students of color. NASPA Journal, 37(3), 496–510.

Astin, A. W. (1993). What matters in college? San Francisco: Jossey-Bass.

Bandura, A. (1997). Self-efficacy: The exercise of control. New York: W.H. Freeman and Company.

Bass, B. M. (1985). Leadership and performance beyond expectations. New York, NY: Free Press.

Bass, B. M., & Avolio, B. J. (1991). The multifactor leadership questionnaire: Form 5x. Binghamton, NY.

Bass, B. M., & Avolio, B. J. (1994). Improving organizational effectiveness through transformational leadership. Thousand Oaks, CA: Sage.

Bass, B. M., & Avolio, B. J. (2004). Multifactor leadership questionnaire: Manual leader form, rater, and scoring key for MLQ (Form 5x-Short). Redwood City, CA.

Brown, T. A. (2015). Confirmatory factor analysis for applied research (2nd ed.). New York, NY: Guilford Press.

Burns, J. M. (1978). Leadership. New York, NY.

Chan, K.-Y., & Drasgow, F. (2001). Toward a theory of individual differences and leadership: Understanding the motivation to lead. Journal of Applied Psychology, 86(3), 481–498.

Collins, J. D., & Rosch, D. M. (2018). Longitudinal leadership capacity growth among participants of a leadership immersion program: How much does structural diversity matter? Journal of Leadership Education, 17(3). https://doi.org/10.12806/V17/I3/R10

Collins, J. D., Suarez, C. E., Beatty, C. C., & Rosch, D. M. (2017). Fostering leadership capacity among black male achievers: Findings from an identity-based leadership immersion program. Journal of Leadership Education, 16(3), 82–96.

Conway, J. M., & Huffcutt, A. I. (2003). A review and evaluation of exploratory factor analysis practices in organizational research. Organizational Research Methods, 6(2), 147–168. https://doi.org/10.1177/1094428103251541

Dugan, J. P. (2017). Leadership theory: Cultivating critical perspectives. San Francisco, CA: Wiley.

Dugan, J. P., Bohle, C. W., Gebhardt, M., Hofert, M., Wilk, E., & Cooney, M. A. (2011). Influences of leadership program participation on students’ capacities for socially responsible leadership. Journal of Student Affairs Research and Practice, 48(1), 65–84. https://doi.org/10.2202/1949-6605.6206

Dugan, J. P., & Komives, S. R. (2010). Influences on college students’ capacities for socially responsible leadership. Journal of College Student Development, 51(5), 525–549.

Ellis, P. D. (2010). The essential guide to effect sizes: Statistical power, meta-analysis, and the interpretation of research results. New York: Cambridge University Press.

Galesic, M., & Bosnjak, M. (2009). Effects of questionnaire length on participation and indicators of response quality in a web survey. Public Opinion Quarterly, 73(2), 349–360. https://doi.org/10.1093/poq/nfp031

Garcia, G. A., Huerta, A. H., Ramirez, J. J., & Patrón, O. E. (2017). Contexts that matter to the leadership development of Latino male college students: A mixed methods perspective. Journal of College Student Development, 58(1), 1–18. https://doi.org/10.1353/csd.2017.0000

Guthrie, K. L., & Jenkins, D. M. (2018). The role of leadership educators: Transforming learning. Charlotte, NC: Information Age Publishing.

Hannah, S. T., & Avolio, B. J. (2010). Ready or not: How do we accelerate the developmental readiness of leaders? Journal of Organizational Behavior, 31(8), 1181–1187. https://doi.org/10.1002/job.675

Hannah, S. T., Avolio, B. J., Luthans, F., & Harms, P. D. (2008). Leadership efficacy: Review and future directions. The Leadership Quarterly, 19(6), 669–692. Retrieved from http://www.sciencedirect.com/science/article/pii/S1048984308001276

Harper, S. R., & Quaye, S. J. (2007). Student organizations as venues for Black identity expression and development among African American male student leaders. Journal of College Student Development, 48(2), 106–128.

Higher Education Research Institute. (1996). A social change model of leadership development (Version III). Los Angeles: University of California Higher Education Research Institute.

Hoyt, C. L., Murphy, S. E., Halverson, S. K., & Watson, C. B. (2003). Group leadership: Efficacy and effectiveness. Group Dynamics: Theory, Research, and Practice, 7(4), 259–274.

Hu, L., & Bentler, P. M. (1999). Cutoff criteria for fit indexes in covariance structure analysis: Conventional criteria versus new alternatives. Structural Equation Modeling: A Multidisciplinary Journal, 6(1), 1–55. https://doi.org/10.1080/10705519909540118

Katz, R. L. (1955). Skills of an effective administrator. Harvard Business Review, 33(1), 33–42.

Keating, K., Rosch, D. M., & Burgoon, L. (2014). Developmental readiness for leadership: The differential effects of leadership courses on creating “ready, willing, and able” leaders. Journal of Leadership Education, 13(3), 1–16. https://doi.org/10.12806/V13/I3/R1

Kezar, A., & Moriarty, D. (2000). Expanding our understanding of student leadership development: A study exploring gender and ethnic identity. Journal of College Student Development, 41, 55–68.

Kodama, C. M., & Dugan, J. P. (2013). Leveraging leadership efficacy for college students: Disaggregating data to examine unique predictors by race. Equity & Excellence in Education, 46(2), 184–201. https://doi.org/10.1080/10665684.2013.780646

Komives, S. R., Dugan, J. P., Owen, J. E., Slack, C., & Wagner, W. (2011). The handbook for student leadership development. San Francisco: Jossey-Bass.

Komives, S. R., Owen, J. E., Longerbeam, S. D., Mainella, F. C., & Osteen, L. (2005). Developing a leadership identity: A grounded theory. Journal of College Student Development, 46(6), 593–611.

Krüger, C., Rowold, J., Borgmann, L., Staufenbiel, K., & Heinitz, K. (2011). The discriminant validity of transformational and transactional leadership. Journal of Personnel Psychology, 10(2), 49–60. https://doi.org/10.1027/1866-5888/a000032

Matsunaga, M. (2010). How to factor-analyze your data right: Do’s, don’ts, and how-to’s. International Journal of Psychological Research, 3(1), 97–110.

McCormick, M. J., Tanguma, J., & López-Forment, A. S. (2002). Extending self-efficacy theory to leadership: A review and empirical test. Journal of Leadership Education, 1(2), 34–49.

Miguel, R. F., & Allen, S. J. (2016). Report on the validation of the Emotionally Intelligent Leadership for Students Inventory. Journal of Leadership Education, 15(4), 15–32. https://doi.org/10.12806/V15/I4/R2

Murphy, S. E. (1992). The contribution of leadership experience and self-efficacy to group performance under evaluation apprehension.

Murphy, S. E., & Ensher, E. A. (1999). The effects of leader and subordinate characteristics in the development of leader-member exchange quality. Journal of Applied Social Psychology, 29(7), 1371–1394. https://doi.org/10.1111/j.1559-1816.1999.tb00144.x

Owen, J. (2012). Examining the design and delivery of collegiate student leadership development programs: Findings from the Multi-Institutional Study of Leadership (MSL-IS), a national report. Washington, DC: Council for the Advancement of Standards in Higher Education.

Podsakoff, P. M., MacKenzie, S. B., Moorman, R. H., & Fetter, R. (1990). Transformational leader behaviors and their effects on followers’ trust in leader, satisfaction, and organizational citizenship behaviors. The Leadership Quarterly, 1(2), 107–142. https://doi.org/10.1016/1048-9843(90)90009-7

Renn, K. A., & Ozaki, C. C. (2010). Psychosocial and leadership identities among leaders of identity-based campus organizations. Journal of Diversity in Higher Education, 3(1), 14–26. https://doi.org/10.1037/a0018564

Rosch, D. M. (2018). Examining the (lack of) effects associated with leadership training participation in higher education. Journal of Leadership Education, 17(4), 169–184. https://doi.org/10.12806/V17/I4/R10

Rosch, D. M., Collier, D., & Thompson, S. E. (2015). An exploration of students’ motivation to lead: An analysis by race, gender, and student leadership behaviors. Journal of College Student Development, 56(3), 286–291. Retrieved from http://muse.jhu.edu/journals/csd/summary/v056/56.3.rosch.html

Rosch, D. M., & Collins, J. D. (2019). Peaks and valleys: A two-year study of student leadership capacity associated with campus involvement. Journal of Leadership Education, 18(1), 68–85. https://doi.org/10.12806/V18/I1/R5

Rosch, D., & Nelson, N. (2019). The differential effects of high school and collegiate student organization involvement on adolescent leader development. Journal of Leadership Education, 17(4), 1–16. https://doi.org/10.12806/v17/i4/r1

Rosch, D., Ogolsky, B., & Stephens, C. M. (2017). Trajectories of student leadership development through training: An analysis by gender, race, and prior exposure. Journal of College Student Development, 58(8). https://doi.org/10.1353/csd.2017.0093

Rost, J. C. (1993). Leadership for the twenty-first century. Westport, CT: Greenwood Publishing Group.

Soria, K., Snyder, S., & Reinhard, A. P. (2015). Strengthening college students’ integrative leadership orientation by building a foundation for civic engagement and multicultural competence. Journal of Leadership Education, 14(1), 55–71. https://doi.org/10.12806/v14/i1/r4

Yukl, G. (2010). Leadership in organizations (7th ed.). Upper Saddle River, NJ: Prentice Hall.